
Databricks JDBC connection

Solution: You can set the ConnectionTimeout option in the JDBC URL configuration to a value that is large enough for the operation to complete.

Once you have downloaded the driver, you can store the driver's JAR file anywhere you like. If you work with BucketFS, upload the JDBC driver there. Additionally, there are two license files for the DB2 driver: db2jcc_license_cu.jar is the license file for DB2 on Linux, Unix, and Windows, and db2jcc_license_cisuz.jar is the license file for DB2 on z/OS (mainframe). Make sure that you upload the license file for the target platform you want to connect to.
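To make the ConnectionTimeout advice above concrete, here is a minimal sketch of adding that option to a JDBC URL. It assumes the driver accepts semicolon-separated `key=value` options after the base URL; the helper name `with_jdbc_option`, the host, the HTTP path, and the value 300 are hypothetical, and the unit of the timeout should be checked in the driver documentation.

```python
def with_jdbc_option(url: str, key: str, value) -> str:
    """Return `url` with `key=value` set among its semicolon-separated options."""
    base, *options = url.rstrip(";").split(";")
    # Drop any existing setting for the same key (compared case-insensitively).
    kept = [o for o in options if o and o.split("=", 1)[0].lower() != key.lower()]
    kept.append(f"{key}={value}")
    return ";".join([base, *kept])


# Hypothetical host and HTTP path, for illustration only.
url = "jdbc:spark://example-host:443/default;transportMode=http;ssl=1;httpPath=example/path"
print(with_jdbc_option(url, "ConnectionTimeout", 300))
```

Replacing an existing value instead of appending a second one avoids relying on how a given driver resolves duplicate keys.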

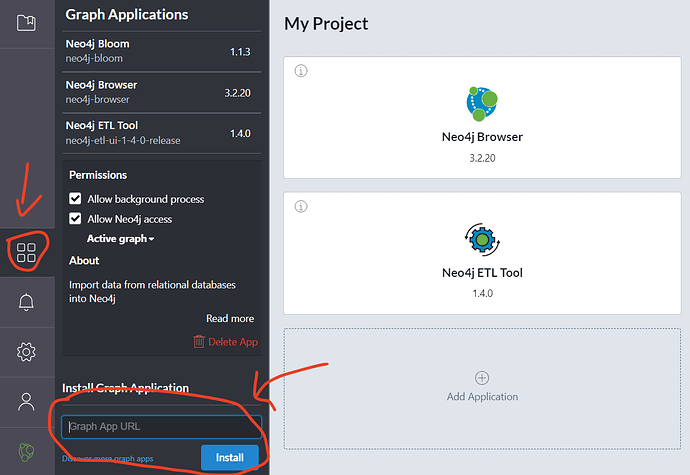

Next to Data Sources, click New Data Source to add a new connection. The DbSchema tool already includes a Databricks driver, which is automatically downloaded when you connect to Databricks. You can also use infacmd to create a Databricks connection. In DBeaver, open the application and, in the Databases menu, select the Driver Manager option. In Cognos, depending on the version, you can connect to an AS/400 database using a DB2 JDBC connection.

Create a Databricks account. Databricks supports the standard SQL data types and has first-class support for both ODBC and JDBC clients. Go to the Databricks JDBC or ODBC driver download page and download the driver.

This post will show how to connect Power BI Desktop with Azure Databricks (Spark). Users can connect Power BI directly to their Databricks clusters using JDBC in order to query data interactively at massive scale using familiar tools. From the database, select the table you plan to sync. Your connection to Databricks tables leverages the SSO authentication that is native to Azure Databricks.

Step 1: Get the JDBC server address. The default is to connect to a database with the same name as the user name. In this article, we'll dive into connecting one of these tools to Databricks with the focus on generating an Entity-Relationship (ER) diagram. As a first test, we load the Databricks DataFrame into Azure SQL DW directly, without using PolyBase and Blob Storage, simply via a JDBC connection.

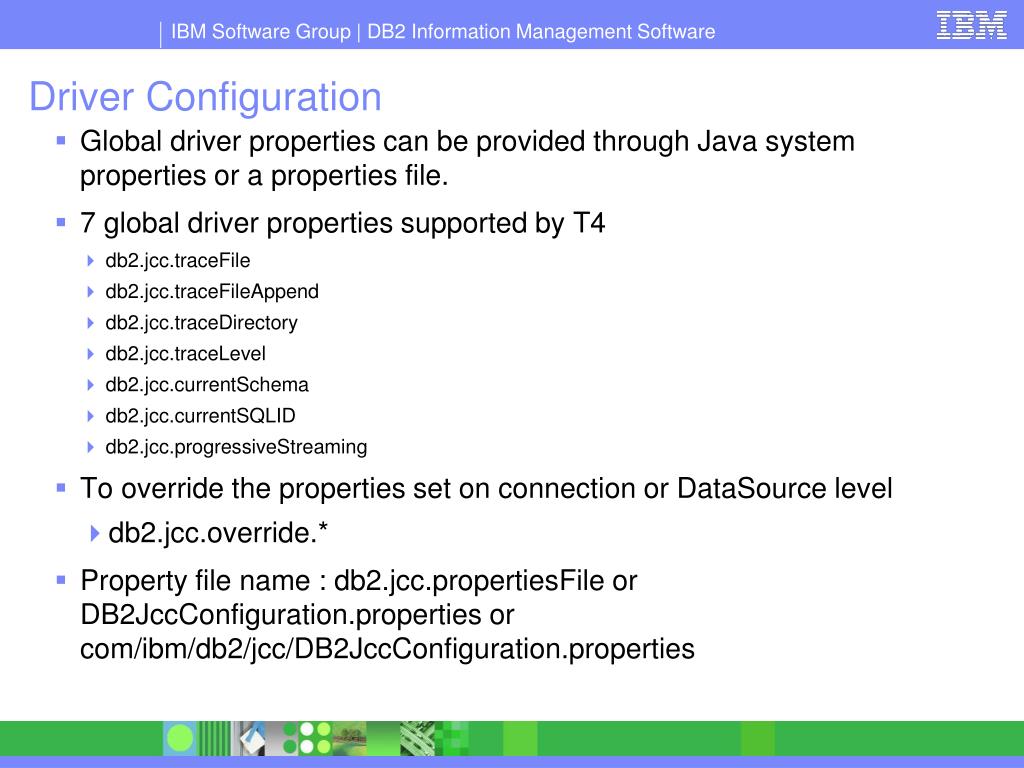

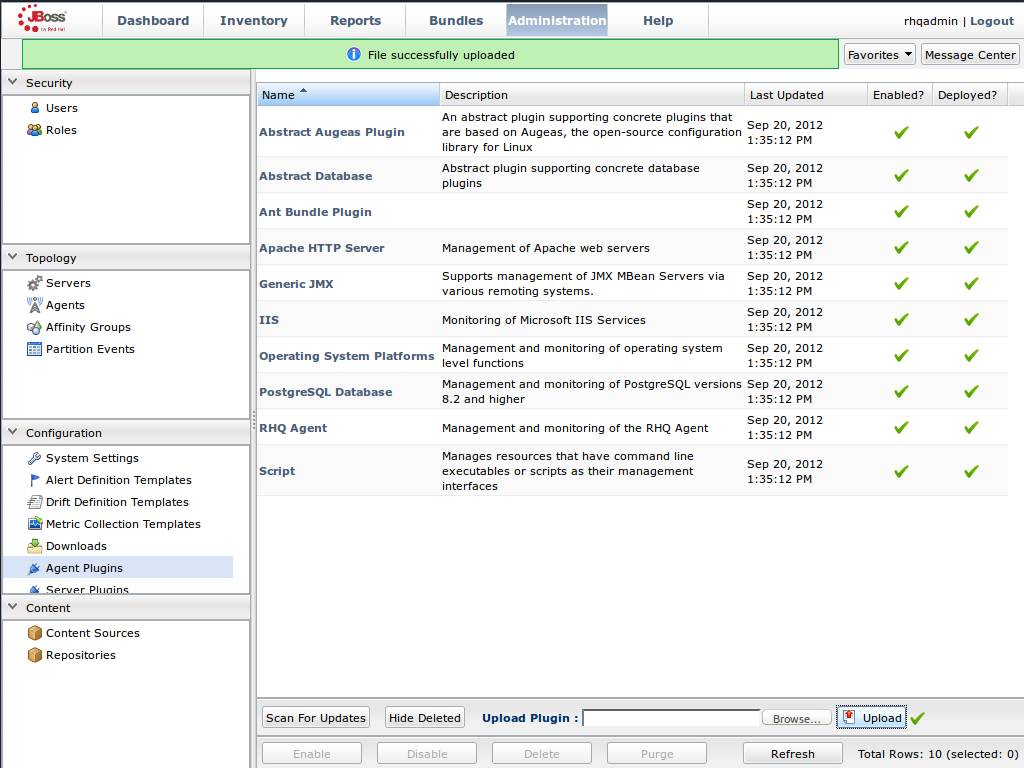

You can use a JDBC connection to access tables in a database. The driver JAR is used by all Java applications to connect to the database. Because Databricks supports JDBC, most third-party SQL tools will work with it. The package lets us connect to and use Apache Spark for high-performance, highly parallelized, and distributed computations.

To get the JDBC server address, click on Clusters. To create an access token, click on Generate New Token. Here, we have stored the Databricks user token in Azure Key Vault and retrieve it each time before calling the Databricks REST API or constructing the JDBC-Hive connection string. It is recommended best practice to store your credentials as secrets and then use them within the notebook.

To connect to SQL Server, you can follow the official Databricks documentation to install the Microsoft JDBC Driver for SQL Server or the Spark connector, and refer to the sample Python code that connects to SQL Server over JDBC. The Spark connector can provide faster bulk inserts and lets you connect using your Azure Active Directory identity. We use a Scala notebook to query the database. In the Azure Portal for your database, there is a Connection Strings blade that details the correctly formatted connection string for the SQL admin. One reported problem is a Databricks JDBC connection failing during a Sqoop process because of the isolation level.

The following few steps show how to create a connection string and use Power BI to connect to Databricks. Launch Power BI Desktop, click Get Data in the toolbar, and click More….

Product: Progress DataDirect for JDBC for Spark SQL driver, version 6. Define the cloud property for the data store. Define the data store type property.
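The token-based connection string described above could be assembled roughly as follows. This is a sketch under assumptions, not the author's code: it uses the URL layout of the Simba Spark JDBC driver (`jdbc:spark://`, with `AuthMech=3` and `UID=token`), while newer Databricks drivers use a `jdbc:databricks://` prefix. The function name, host, HTTP path, and the `DATABRICKS_TOKEN` environment variable are hypothetical. In a Databricks notebook the token would more typically come from `dbutils.secrets.get(scope=..., key=...)`.

```python
import os


def databricks_jdbc_url(host: str, http_path: str, token: str,
                        scheme: str = "jdbc:spark") -> str:
    """Build a token-authenticated JDBC URL (Simba Spark driver layout assumed)."""
    return (
        f"{scheme}://{host}:443/default;transportMode=http;ssl=1;"
        f"httpPath={http_path};AuthMech=3;UID=token;PWD={token}"
    )


# Read the token from the environment rather than hard-coding it.
# DATABRICKS_TOKEN is a hypothetical variable name; the fallback is a placeholder.
token = os.environ.get("DATABRICKS_TOKEN", "<personal-access-token>")

# Hypothetical host and HTTP path, for illustration only.
url = databricks_jdbc_url("example-host", "example/http/path", token)

# Avoid printing the secret itself; show only the part before the password.
print(url.split(";PWD=")[0] + ";PWD=***")
```

Keeping the token out of the source and out of the log output is the point of the secrets recommendation; the URL itself should be treated as a credential once the token is embedded in it.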
The driver JAR may be obtained from the Data Server Client, and the license file from DB2 Connect. If you are testing a connection to WebLogic, check the WebLogic Server log. In the Driver Name box, enter a user-friendly name for the driver.

You may have a use case where you need to query and report data from Hive. You can also read data from your CARTO dataset using dataframes, take advantage of Databricks functionality for cleaning, transforming, or enriching your datasets and, finally, write the transformed data back to CARTO.

By downloading this Simba Spark SQL ODBC and/or JDBC driver (together, the "DBC Drivers"), you agree to be bound by these Terms and Conditions (the "Terms"), which are in addition to, and not in place of, any terms you have agreed to with Databricks regarding the Databricks services.

Create a JDBC data source for Databricks data. What is the JDBC URL? For assistance in constructing the JDBC URL, use the connection string designer built into the Databricks JDBC Driver. You can query and connect to an existing Azure SQL Database from Azure Databricks by building a JDBC URL with the relevant credentials. Another option for connecting to SQL Server and Azure SQL Database is the Apache Spark connector. You can try the same workaround for other databases when the query option fails.
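As an illustration of building a JDBC URL for Azure SQL Database, the sketch below prepares the options that Spark's generic JDBC data source expects. The function name and all server, database, table, and credential values are hypothetical; the URL layout follows the format Azure shows for SQL Server JDBC connection strings, and the Spark call appears only as a comment because it needs a running Spark session.

```python
def azure_sql_jdbc_options(server: str, database: str, table: str,
                           user: str, password: str) -> dict:
    """Return options for Spark's JDBC data source targeting Azure SQL Database."""
    url = (
        f"jdbc:sqlserver://{server}.database.windows.net:1433;"
        f"database={database};encrypt=true;"
        "trustServerCertificate=false;loginTimeout=30"
    )
    return {
        "url": url,
        "dbtable": table,
        "user": user,
        "password": password,
        "driver": "com.microsoft.sqlserver.jdbc.SQLServerDriver",
    }


# Hypothetical values; real credentials should come from a secret store.
opts = azure_sql_jdbc_options("example-server", "example-db", "dbo.example_table",
                              "example-user", "example-password")
print(opts["url"])

# In a Databricks notebook, where `spark` is predefined, the read would be:
# df = spark.read.format("jdbc").options(**opts).load()
```

Passing the user and password as separate options rather than inside the URL keeps the URL safe to log.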


Author: Brad
